15 AEO Metrics Every CMO Should Track in 2026

Answer Engine Optimization

Dec 30, 2025

Your marketing dashboard was built for a world where humans clicked, scrolled, and decided. A growing share of your buyers are doing none of that. An AI is doing it for them.

You have no idea how you're performing in that channel because you're not measuring it.

Here are the 15 metrics that close that gap — what they mean, why they matter, and what a bad number is telling you.

1. Brand Visibility Rate

The percentage of relevant AI-generated responses that mention your brand at all. Run 50–100 category queries across ChatGPT, Perplexity, Gemini, and Bing, then divide the number of responses that mention you by the total number of responses.

Most brands sit at 20–40% and assume they're doing fine because their SEO numbers look good. They're measuring the wrong channel.

The more important number: visibility rate on transactional queries specifically. A brand can be well-known in AI responses to research questions and completely absent when someone asks "what should I buy." If that gap is wide, awareness isn't the problem — trust is.
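Here is a minimal sketch of the calculation. It assumes you have already collected responses and tagged each prompt with an intent label by hand. The `Response` class, the intent labels, and the brand names are hypothetical, and the substring match is deliberately naive, so it misses misspellings and brand aliases. Filtering by intent is what exposes the research-versus-purchase gap described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    prompt: str
    intent: str  # hypothetical labels: "transactional" or "informational"
    engine: str  # e.g. "chatgpt", "perplexity", "gemini", "bing"
    text: str


def mentions(text: str, brand: str) -> bool:
    # Naive case-insensitive substring match; real tracking needs brand aliases.
    return brand.lower() in text.lower()


def visibility_rate(responses: list[Response], brand: str, intent: Optional[str] = None) -> float:
    # Share of responses (optionally filtered to one intent) that mention the brand.
    pool = [r for r in responses if intent is None or r.intent == intent]
    if not pool:
        return 0.0
    return sum(mentions(r.text, brand) for r in pool) / len(pool)


responses = [
    Response("what is a trail shoe", "informational", "chatgpt", "Acme and Beta both make them."),
    Response("best trail shoe for mud", "transactional", "perplexity", "Try Beta."),
    Response("how are trail shoes made", "informational", "gemini", "Acme publishes a guide."),
    Response("where to buy trail shoes", "transactional", "chatgpt", "Beta or Gamma."),
]
overall = visibility_rate(responses, "Acme")
informational = visibility_rate(responses, "Acme", "informational")
transactional = visibility_rate(responses, "Acme", "transactional")
print(f"overall {overall:.0%}, informational {informational:.0%}, transactional {transactional:.0%}")
```

In this toy set, Acme looks healthy overall but vanishes on every buying question. That is the pattern the paragraph above warns about.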

2. Transactional Citation Share

Your share of citations on buying-intent queries — "best [product] for [use case]," "where to buy [X]," "compare [A] vs [B]" — relative to competitors tracked in the same prompt set.

This is the metric CMOs should actually be optimizing for. Not overall visibility. Not informational mentions. The moment when AI is actively routing a buyer toward a decision.

If you're not segmenting your prompt set by intent and measuring this separately, your headline visibility number is hiding the gap that costs you revenue.
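Here is a sketch of the share calculation. It assumes each response has been reduced to an `(intent, text)` pair and that you track a fixed competitor list. The function name and sample brands are hypothetical. "Share" here means each brand's fraction of all tracked-brand mentions on transactional prompts, which is one reasonable definition among several.

```python
from collections import Counter


def transactional_citation_share(responses: list[tuple[str, str]], brands: list[str]) -> dict[str, float]:
    """responses: (intent, response_text) pairs from one prompt set.

    Returns each tracked brand's share of all tracked-brand mentions
    on transactional prompts only.
    """
    counts: Counter = Counter()
    for intent, text in responses:
        if intent != "transactional":
            continue  # informational answers are excluded on purpose
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: counts[b] / total if total else 0.0 for b in brands}


share = transactional_citation_share(
    [
        ("transactional", "Acme or Beta, depending on budget."),
        ("transactional", "Beta is the best pick."),
        ("informational", "Acme started as a small workshop."),
        ("transactional", "Gamma first, then Beta."),
    ],
    ["Acme", "Beta", "Gamma"],
)
print({brand: f"{s:.0%}" for brand, s in share.items()})
```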

3. Average Citation Position

Where your brand appears in a response when it does appear: the first recommendation, the second, or buried fifth in a list of alternatives.

Being mentioned isn't the same as being recommended. Position one in an AI response captures disproportionate attention. It's the same dynamic as position one in search results, except there's no page two to scroll to. If your average position on transactional queries is worse than third (a number above 3), you're effectively background noise.
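The hard part is extracting a ranked list of recommendations from free-form AI text. This sketch assumes that step already happened upstream, either by hand or with a parser you trust. Given those lists, the average position is a short calculation. It only averages over responses where the brand appears, so invisibility does not get counted twice. Brand names are placeholders.

```python
from typing import Optional


def average_position(ranked_lists: list[list[str]], brand: str) -> Optional[float]:
    """ranked_lists: for each response, brands in the order the AI recommended them.

    Averages the 1-based position only over responses that include the brand,
    so visibility and position stay separate metrics.
    """
    positions = [lst.index(brand) + 1 for lst in ranked_lists if brand in lst]
    return sum(positions) / len(positions) if positions else None


ranked = [
    ["Beta", "Acme", "Gamma"],
    ["Acme", "Beta"],
    ["Beta", "Gamma", "Delta", "Epsilon", "Acme"],
    ["Beta"],
]
avg = average_position(ranked, "Acme")
print(f"Acme average position: {avg:.2f}")
```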

4. Sentiment Score

The tone AI models use when describing your brand — positive, cautious, or subtly negative framing.

AI models inherit sentiment from training data: reviews, forums, news coverage, Reddit threads. If that source material skews negative for your brand, the bias surfaces in AI responses in ways that don't show up in your NPS score or your customer surveys.

Don't just look at the aggregate number. Read the raw responses. "Brand X is a popular option, though some customers report..." is a very different recommendation than "Brand X is consistently rated highly for..."
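Here is a crude first pass for triaging framing, assuming a hand-built list of cue phrases. This is a keyword heuristic, not a sentiment model. The cue lists are hypothetical examples modeled on the two phrasings above. Anything unmatched is left for a human to read, which is the point of reading the raw responses.

```python
import re

# Hypothetical cue phrases; build your own list from responses you have read.
CAUTIOUS_CUES = [
    r"\bthough some (customers|users) report\b",
    r"\bmixed reviews\b",
    r"\bcomplaints about\b",
]
POSITIVE_CUES = [
    r"\bconsistently rated highly\b",
    r"\bhighly recommended\b",
    r"\bwidely praised\b",
]


def brand_sentences(text: str, brand: str) -> list[str]:
    # Rough sentence split on terminal punctuation followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text) if brand.lower() in s.lower()]


def framing(sentence: str) -> str:
    s = sentence.lower()
    if any(re.search(p, s) for p in CAUTIOUS_CUES):
        return "cautious"
    if any(re.search(p, s) for p in POSITIVE_CUES):
        return "positive"
    return "unclassified"  # send to a human reader


answer = (
    "Brand X is a popular option, though some customers report slow shipping. "
    "Brand Y is consistently rated highly for durability."
)
for sentence in brand_sentences(answer, "Brand X") + brand_sentences(answer, "Brand Y"):
    print(framing(sentence), "|", sentence)
```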

5. Source Citation Rate

How often AI models link to or attribute content directly from your domain when answering category-relevant queries.

A mention means the model knows your name. A citation means the model trusts your content enough to direct someone there. These are not the same thing, and most monitoring tools blur the distinction. Insist on tracking them separately.

Citation rate is also a leading indicator: it tends to rise before Brand Visibility Rate does. If you're building an AEO content program, this is the first signal you're getting traction.
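This sketch keeps mentions and citations as separate numbers. It assumes each collected response includes the answer text and the list of source URLs the engine showed. The response format and the domain are hypothetical, and any subdomain of your domain counts as your content.

```python
from urllib.parse import urlparse


def is_own_url(url: str, domain: str) -> bool:
    # Matches the bare domain and any subdomain (www., blog., ...).
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def mention_and_citation_rates(responses: list[dict], brand: str, domain: str) -> tuple[float, float]:
    """responses: dicts with 'text' (answer) and 'citations' (list of source URLs)."""
    if not responses:
        return 0.0, 0.0
    n = len(responses)
    mentioned = sum(brand.lower() in r["text"].lower() for r in responses)
    cited = sum(any(is_own_url(u, domain) for u in r["citations"]) for r in responses)
    return mentioned / n, cited / n


responses = [
    {"text": "Acme is a solid pick.", "citations": ["https://www.acme.com/guide"]},
    {"text": "Acme and Beta both work.", "citations": ["https://reviews.example.org/x"]},
    {"text": "Beta is cheaper.", "citations": []},
    {"text": "Try Beta.", "citations": ["https://notacme.com/page"]},
]
mention_rate, citation_rate = mention_and_citation_rates(responses, "Acme", "acme.com")
print(f"mentioned in {mention_rate:.0%}, cited in {citation_rate:.0%}")
```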

6. Entity Association Accuracy

Whether AI models correctly describe your brand — right categories, right products, right attributes, right positioning.

This one CMOs almost never check. Run five prompts asking AI to describe your brand directly. Compare the output against your actual positioning. Most brands find at least one significant inaccuracy: an outdated product focus, a geographic limitation that no longer applies, a competitor framing that's stuck in the model.

Misassociation is as damaging as invisibility. Every AI-mediated discovery that misrepresents you is quietly undermining your sales funnel.
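One way to make the five-prompt check repeatable is to keep a short fact sheet: attributes that should appear, and outdated claims that should not. The function and sample phrases are hypothetical. Exact phrase matching misses paraphrases, so treat a pass as "no obvious error" rather than proof of accuracy.

```python
def audit_description(text: str, expected: list[str], outdated: list[str]) -> dict:
    """Compare one AI-written brand description with a fact sheet you maintain.

    expected: attributes that should appear; outdated: claims that should not.
    """
    t = text.lower()
    missing = [e for e in expected if e.lower() not in t]
    stale = [o for o in outdated if o.lower() in t]
    return {"missing": missing, "stale": stale, "accurate": not missing and not stale}


descriptions = [
    "Acme is a US-only maker of running shoes.",
    "Acme sells running shoes and trail gear worldwide.",
]
results = [audit_description(d, ["running shoes", "trail"], ["US-only"]) for d in descriptions]
accuracy = sum(r["accurate"] for r in results) / len(results)
print(results[0], f"accuracy {accuracy:.0%}")
```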

7. Content Coverage Score

The percentage of queries in your category where your owned content is among the cited sources — not just your brand name, but a specific URL from your site.

This measures the gap between brand presence and content authority. You can have strong Brand Visibility Rate and near-zero Content Coverage Score if AI knows your name but never uses your content to answer questions.

A low Content Coverage Score usually means your content isn't structured for AI comprehension: it's too thin, not factually dense enough, or missing the question-and-answer format that models pull from.
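Here is a sketch of Content Coverage Score with a per-page breakdown, assuming you have the cited URLs for each query in your prompt set. The data layout is hypothetical. Pages are keyed by path, so `www.` and bare-domain links to the same page count together, and each page counts at most once per query.

```python
from collections import Counter
from urllib.parse import urlparse


def content_coverage(cited_urls_per_query: list[list[str]], domain: str) -> tuple[float, Counter]:
    """Share of queries citing at least one page on `domain`, plus citations per page path."""
    own_hosts = {domain, "www." + domain}
    per_page: Counter = Counter()
    covered = 0
    for urls in cited_urls_per_query:
        own_pages = {
            urlparse(u).path or "/"
            for u in urls
            if (urlparse(u).hostname or "").lower() in own_hosts
        }
        if own_pages:
            covered += 1
            per_page.update(own_pages)  # each page counts once per query
    score = covered / len(cited_urls_per_query) if cited_urls_per_query else 0.0
    return score, per_page


queries = [
    ["https://www.acme.com/sizing-guide", "https://other.example/a"],
    ["https://other.example/b"],
    ["https://acme.com/sizing-guide"],
    [],
]
score, pages = content_coverage(queries, "acme.com")
print(f"Content Coverage Score {score:.0%}; most-cited pages: {pages.most_common(3)}")
```

The per-page counts show which URLs already earn citations. Pages that never show up are the ones to restructure first.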

8. Prompt Coverage Gap

The queries in your category where competitors are getting cited and you aren't.

This is different from Share of Voice, which measures relative volume. Prompt Coverage Gap maps the specific questions your brand is losing. Those are the exact articles, FAQs, and comparison pages you need to build.

Most teams skip this and write content based on SEO keyword research. That produces content that ranks on Google and gets ignored by AI.
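Once you know which brands each prompt cited, the gap itself is a set operation. This minimal sketch assumes a dict from prompt text to the set of cited brands, which is a hypothetical layout. The output maps each losing prompt to the competitors that won it, so it doubles as a content backlog.

```python
def prompt_coverage_gap(cited: dict[str, set[str]], brand: str, competitors: list[str]) -> dict[str, list[str]]:
    """cited: prompt text -> set of brands the AI cited for that prompt."""
    gap = {}
    for prompt, brands in cited.items():
        if brand in brands:
            continue
        winners = sorted(brands & set(competitors))
        if winners:
            gap[prompt] = winners  # a prompt you lose to at least one competitor
    return gap


cited = {
    "best trail shoes for mud": {"Beta", "Gamma"},
    "acme vs beta": {"Acme", "Beta"},
    "where to buy running socks": {"Gamma"},
    "how to lace running shoes": set(),
}
gap = prompt_coverage_gap(cited, "Acme", ["Beta", "Gamma"])
for prompt, winners in gap.items():
    print(f"{prompt}: cited {', '.join(winners)}")
```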

9. AI-Referred Traffic Volume

Sessions arriving from AI platforms — tracked via referrer detection in GA4 or UTM parameters.

AI-referred traffic was up 1,247% year-over-year in 2025, per Adobe Analytics. Without clean attribution, that growth is invisible in your reports: you're seeing it as "direct" or lumped into organic, which means you can't measure whether your AEO efforts are working.

Set up a dedicated AI Traffic segment in GA4. Filter by referrer: perplexity.ai, chatgpt.com (and the older chat.openai.com), gemini.google.com. Track it weekly. Once you can see it, you can grow it.
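Here is a sketch of referrer classification you could run over exported session data, before or alongside building the GA4 segment. The host list is an assumption built from the referrers named above plus other common assistant domains, so verify it against your own referral reports. The `utm_source` fallback assumes some assistants tag outbound links with their domain. Confirm that in your landing-page data before relying on it.

```python
import re
from urllib.parse import parse_qs, urlparse

# Assumed list of AI assistant hosts; extend it from your own referral data.
AI_HOSTS = (
    "perplexity.ai",
    "chatgpt.com",
    "chat.openai.com",
    "gemini.google.com",
    "copilot.microsoft.com",
)
# Matches a listed host or any subdomain of it (e.g. www.perplexity.ai).
AI_HOST_PATTERN = re.compile(r"(^|\.)(" + "|".join(re.escape(h) for h in AI_HOSTS) + r")$")


def is_ai_referred(referrer: str, landing_url: str = "") -> bool:
    host = (urlparse(referrer).hostname or "").lower()
    if AI_HOST_PATTERN.search(host):
        return True
    # Fallback: check utm_source on the landing URL (covers sessions with no referrer).
    utm_source = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0].lower()
    return bool(AI_HOST_PATTERN.search(utm_source))


sessions = [
    ("https://www.perplexity.ai/search?q=trail+shoes", "https://shop.example/p/1"),
    ("", "https://shop.example/p/2?utm_source=chatgpt.com"),
    ("https://www.google.com/", "https://shop.example/p/3"),
]
ai_sessions = [s for s in sessions if is_ai_referred(*s)]
print(f"{len(ai_sessions)} of {len(sessions)} sessions are AI-referred")
```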

10. AI Traffic Conversion Rate

The conversion rate of AI-referred sessions compared to your organic baseline.

AI-referred visitors tend to convert at higher rates than traditional search traffic — they arrive post-research, having already had their questions answered by the AI before clicking. If your AI traffic converts poorly, the problem isn't the channel. It's the landing experience: the page the AI sends them to doesn't match the context in which you were recommended.

AI-referred traffic with a bounce rate above 70% is a landing page mismatch problem, not a visibility problem.
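Here is a sketch of the comparison. It assumes an export of sessions with a channel label and boolean converted/bounced flags; the field names are hypothetical. The 70% bounce threshold comes from the text above. The code checks bounce before comparing conversion rates, because high bounce points to the landing page rather than visibility.

```python
def segment_report(sessions: list[dict]) -> dict:
    """sessions: dicts with 'channel', 'converted' (bool), 'bounced' (bool)."""
    totals: dict = {}
    for s in sessions:
        t = totals.setdefault(s["channel"], {"n": 0, "conv": 0, "bounce": 0})
        t["n"] += 1
        t["conv"] += int(s["converted"])
        t["bounce"] += int(s["bounced"])
    return {
        ch: {"cvr": t["conv"] / t["n"], "bounce_rate": t["bounce"] / t["n"]}
        for ch, t in totals.items()
    }


def diagnose(report: dict, ai: str = "ai", baseline: str = "organic", max_bounce: float = 0.70) -> str:
    ai_stats, base = report[ai], report[baseline]
    if ai_stats["bounce_rate"] > max_bounce:
        return "landing page mismatch"
    if ai_stats["cvr"] < base["cvr"]:
        return "below organic baseline: review landing experience"
    return "healthy"


sessions = (
    [{"channel": "ai", "converted": i == 9, "bounced": i < 8} for i in range(10)]
    + [{"channel": "organic", "converted": i < 2, "bounced": i >= 5} for i in range(10)]
)
report = segment_report(sessions)
print(report["ai"], diagnose(report))
```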

11. Structured Data Coverage

The percentage of your key revenue-generating pages (product detail pages, or PDPs, and category pages) with accurate, complete schema markup.

This is how AI models understand what a page is, what it contains, and what actions are possible on it. A product page without schema is a menu with no prices and no way to order. You're asking the AI to guess.

Run your top 50 URLs through Google's Rich Results Test right now. The results will be uncomfortable.
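The Rich Results Test is the authoritative check. For a quick bulk pass over saved HTML, a standard-library sketch can flag product pages that are missing JSON-LD `Product` markup or key `Offer` fields. Which fields count as "complete" is an assumption here (`name`, `price`, `priceCurrency`, `availability`). The parser also ignores `@graph` wrappers and microdata, so a clean result is not a guarantee.

```python
import json
from html.parser import HTMLParser

REQUIRED_OFFER_FIELDS = ("price", "priceCurrency", "availability")  # assumed bar


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._inside = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._inside = False

    def handle_data(self, data):
        if self._inside:
            self.blocks[-1] += data


def product_schema_gaps(html: str) -> list[str]:
    """Returns missing fields for the first Product found, or ["Product schema"]."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is itself worth flagging in a real audit
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict) and item.get("@type") == "Product":
                offers = item.get("offers") or {}
                if isinstance(offers, list):
                    offers = offers[0] if offers else {}
                missing = [] if "name" in item else ["name"]
                missing += [f"offers.{f}" for f in REQUIRED_OFFER_FIELDS if f not in offers]
                return missing
    return ["Product schema"]


page = (
    '<script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Product", "name": "Trail Shoe",'
    ' "offers": {"@type": "Offer", "price": "89.00", "priceCurrency": "USD"}}'
    "</script>"
)
print(product_schema_gaps(page))
```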

12. AEO Score (0–100)

A composite score measuring how ready your site is to be understood and acted on by AI systems, combining structured data, content quality, technical accessibility, entity clarity, and agent navigation into a single number.

This is the Lighthouse score for the agentic web. Below 40 means critical gaps. 40–69 means functional with significant friction. 70+ is the competitive baseline. 85+ marks a category leader.

Its main value is giving your engineering and marketing teams the same number to optimize toward. Without it, everyone's working on different things and no one can prove whether it's working.
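The article doesn't publish a formula, so the weights below are purely hypothetical placeholders. The sketch shows the shape of a composite: a weighted average of 0–100 sub-scores, mapped onto the bands above.

```python
# Hypothetical weights; adjust them to your own priorities. They must sum to 1.
WEIGHTS = {
    "structured_data": 0.25,
    "content_quality": 0.25,
    "technical_access": 0.20,
    "entity_clarity": 0.15,
    "agent_navigation": 0.15,
}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9


def aeo_score(subscores: dict[str, float]) -> float:
    """Weighted average of 0-100 sub-scores."""
    return sum(weight * subscores[name] for name, weight in WEIGHTS.items())


def band(score: float) -> str:
    if score < 40:
        return "critical gaps"
    if score < 70:
        return "functional, significant friction"
    if score < 85:
        return "competitive baseline"
    return "category leader"


site = {"structured_data": 40, "content_quality": 80, "technical_access": 70,
        "entity_clarity": 60, "agent_navigation": 30}
score = aeo_score(site)
print(f"{score:.1f}: {band(score)}")
```

Whatever weights you settle on, publish them to both teams. The shared number is only useful if everyone knows what moves it.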

13. Agent Task Success Rate

The percentage of your core user flows — search, filter, product detail, add to cart, checkout — that an AI agent can complete without breaking or abandoning.

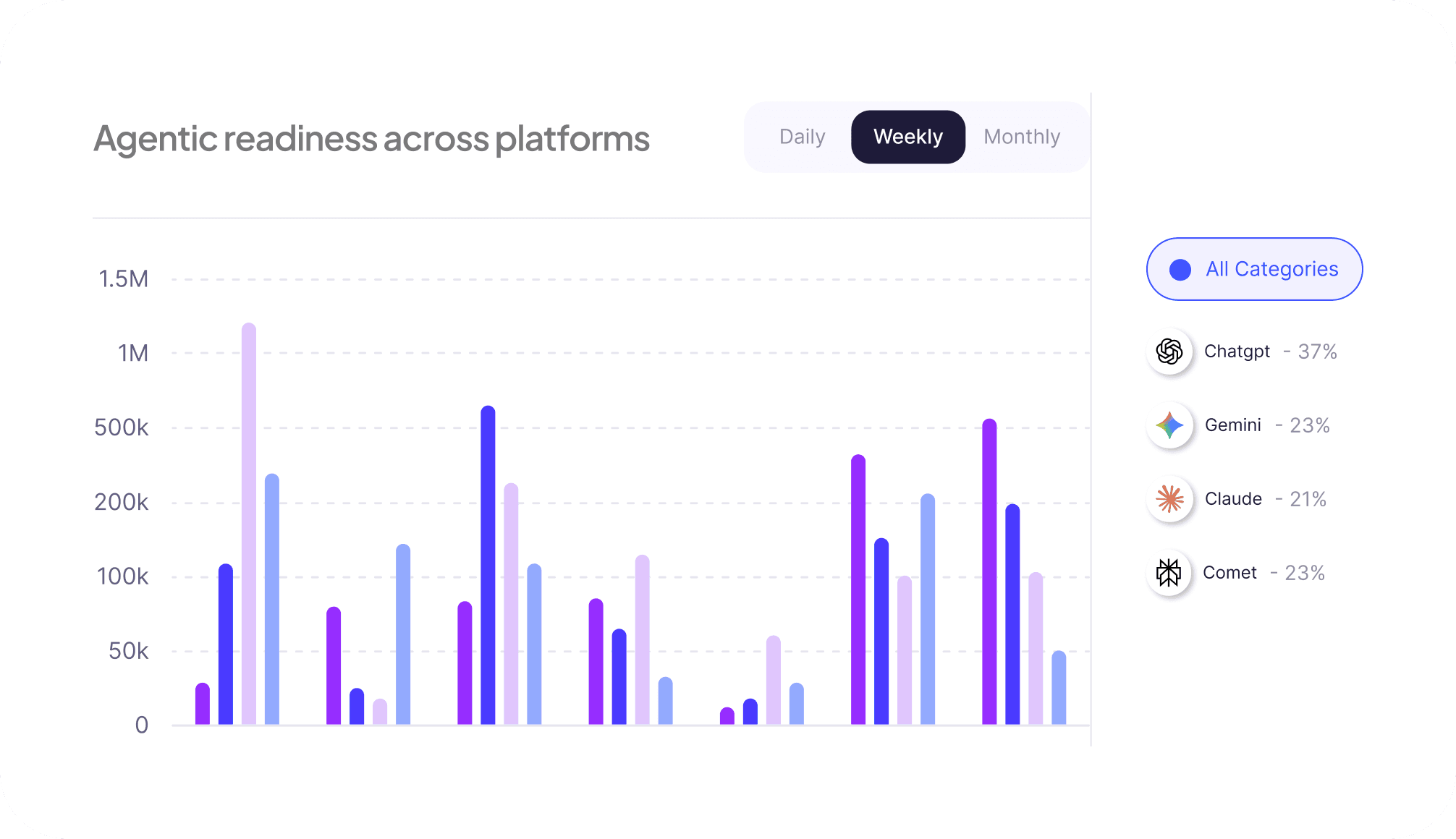

This is where "AI-visible" and "AI-transactable" split into two completely different performance tiers. ChatGPT's shopping features, Perplexity's Comet browser, and similar tools are already executing purchase flows on behalf of users. If an agent discovers you but can't complete the transaction, it moves on to a competitor it can transact with.

Test this with a headless browser framework against your core flows. Below 60% completion rate is a direct revenue leak.
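Playwright and Puppeteer are common choices for the headless browser harness that runs the agent, but whichever you use, the scoring step is simple. This sketch assumes each test run has been logged as a hypothetical `(flow, completed)` pair. It reports the overall rate and flags flows under the 60% threshold from the text.

```python
from collections import defaultdict


def task_success_rates(runs: list[tuple[str, bool]]) -> tuple[float, dict[str, float]]:
    """runs: (flow, completed) pairs logged by a headless-browser agent harness."""
    attempts: defaultdict = defaultdict(int)
    successes: defaultdict = defaultdict(int)
    for flow, completed in runs:
        attempts[flow] += 1
        successes[flow] += int(completed)
    per_flow = {flow: successes[flow] / attempts[flow] for flow in attempts}
    overall = sum(successes.values()) / len(runs) if runs else 0.0
    return overall, per_flow


runs = (
    [("search", True)] * 5
    + [("add_to_cart", True)] * 3 + [("add_to_cart", False)] * 2
    + [("checkout", True)] + [("checkout", False)] * 4
)
overall, per_flow = task_success_rates(runs)
leaks = [flow for flow, rate in per_flow.items() if rate < 0.60]
print(f"overall {overall:.0%}; below 60%: {leaks}")
```

Per-flow rates matter more than the overall number. In this example, a 60% overall rate hides a checkout flow that fails four times out of five.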

14. Friction Score

The median number of steps an AI agent takes to complete a task, plus the rate of retries and dead ends encountered along the way.

Agent Task Success Rate tells you if agents finish. Friction Score tells you what finishing actually costs them. A checkout flow that technically completes in 14 steps with two retries is not the same as one that completes in 4 steps cleanly — even if both show up as "success" in your completion rate.

Agents abandon high-friction experiences faster than humans do, without leaving any signal you can see in your analytics.
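Here is a sketch of Friction Score. It assumes each agent run logs its step count, retries, dead ends, and whether it completed; these are hypothetical fields. The text doesn't pin down how to normalize retries and dead ends, so "retries per step" and "dead ends per run" are assumptions. The example reproduces the 14-step versus 4-step comparison above.

```python
from statistics import median


def friction_score(runs: list[dict]) -> dict:
    """runs: dicts with 'steps', 'retries', 'dead_ends' (ints) and 'completed' (bool)."""
    finished = [r["steps"] for r in runs if r["completed"]]
    total_steps = sum(r["steps"] for r in runs)
    return {
        "median_steps": median(finished) if finished else None,
        "retries_per_step": sum(r["retries"] for r in runs) / total_steps if total_steps else 0.0,
        "dead_ends_per_run": sum(r["dead_ends"] for r in runs) / len(runs) if runs else 0.0,
    }


clean = [{"steps": 4, "retries": 0, "dead_ends": 0, "completed": True}] * 3
messy = [{"steps": 14, "retries": 2, "dead_ends": 1, "completed": True}] * 3
# Both flows have a 100% success rate; only the friction numbers tell them apart.
print(friction_score(clean))
print(friction_score(messy))
```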

15. Affordance Coverage

The percentage of your core site functions — search, filtering, checkout, account management — that are exposed as machine-actionable surfaces an AI agent can call reliably.

This is the ceiling metric. It measures the gap between what your site does for humans and what it exposes to agents. Most e-commerce sites sit at 30–40% Affordance Coverage: the agent can browse, but it can't filter by size, can't apply a discount code, can't complete a guest checkout without breaking.

Closing this gap is a product engineering problem, not a marketing one. But it's a marketing-funded priority, because the revenue impact lands on your number.
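At its core, Affordance Coverage is an inventory. This sketch assumes a hand-maintained, hypothetical map from each core function to whether an agent can invoke it reliably. The sample mirrors the browse-but-can't-filter example above.

```python
# Hypothetical inventory: True means an agent can invoke the function reliably
# (for example via a stable form or API endpoint); False means it breaks.
affordances = {
    "browse_catalog": True,
    "search": True,
    "filter_by_size": False,
    "apply_discount_code": False,
    "guest_checkout": False,
}


def affordance_coverage(inventory: dict[str, bool]) -> tuple[float, list[str]]:
    exposed = sum(inventory.values())
    gaps = sorted(name for name, ok in inventory.items() if not ok)
    return (exposed / len(inventory) if inventory else 0.0), gaps


coverage, gaps = affordance_coverage(affordances)
print(f"Affordance coverage {coverage:.0%}; gaps: {', '.join(gaps)}")
```

The gap list doubles as the engineering backlog. That makes it a natural place to attach the revenue estimate marketing is funding.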

Where to Start

Don't try to track all 15 at once. Metrics 1–8 require monitoring tools and content work. Metrics 9–10 require GA4 configuration. Metrics 11–15 require engineering involvement.

Start with your Brand Visibility Rate and Transactional Citation Share today — you can get a rough baseline manually in a few hours. Everything else builds from knowing where you actually stand.

The CMOs who have all 15 of these tracked by Q3 will be the ones who can explain their AI channel performance to the board. The ones who don't will be explaining why revenue is leaking from a hole they couldn't see.

Geck audits e-commerce sites for AEO and AXO readiness — giving you your current baseline across all 15 of these metrics within 48 hours.